Biofoundationalism: The Shape Test

AI neurology & Geocentrism 2.0. I reason; it calculates. I think; it predicts.

This essay will change how you think about thinking. It examines patterns in the natural world and their manifestation across technological and biological systems. It may alter your perception and use of AI in ways you can’t anticipate.

There may be elements in here you instinctively hate, and that reaction illustrates the point. Discomfort is the thesis arriving before the argument. What we resist and project reveals assumptions about ourselves. These assumptions are exactly what's being examined.

The Turing Test asked whether machines could pass for human.

The Shape Test inverts this: can machines expose what’s genuinely ours through what they fail to replicate? It dissects which parts of mind and body belong to the shape and which to the species.

What Making Sand Think was to semiconductors and the substrate of technology, The Shape Test is to the substrate of cognition. Writing this disrupted my awareness of how I reason and learn, then rebuilt it better. I hope reading it does the same for you.

I listened to this on loop while writing. Turns it from essay into experience. Enjoy.

Note: additional research and history compiled for this essay are in a separate document: The Shape Test: Notes, History, & Research. I’ll link to it throughout as it adds color.

Everything is explained assuming no prior expertise.

Prelude

AI discourse sorts into two camps: techno-optimist abundance ("AI will solve everything") and techno-pessimist dread ("AI will kill us" or "It's overrated and irrelevant"). They agree more than they realize, both viewing AI as categorically different from us. One wants to worship the machine. The other wants to bury it. Neither wants to look at it. Let’s gaze into it. Not metaphorically. Mechanistically.

Rather than opining about AI philosophically, economically, or politically, Biofoundationalism sees only biology, technology, and the physical layers they inhabit. Two systems sharing no ancestry, substrate, or selection pressure — built to solve unrelated problems on wildly different timescales — arrived at eerily similar designs. Independently.

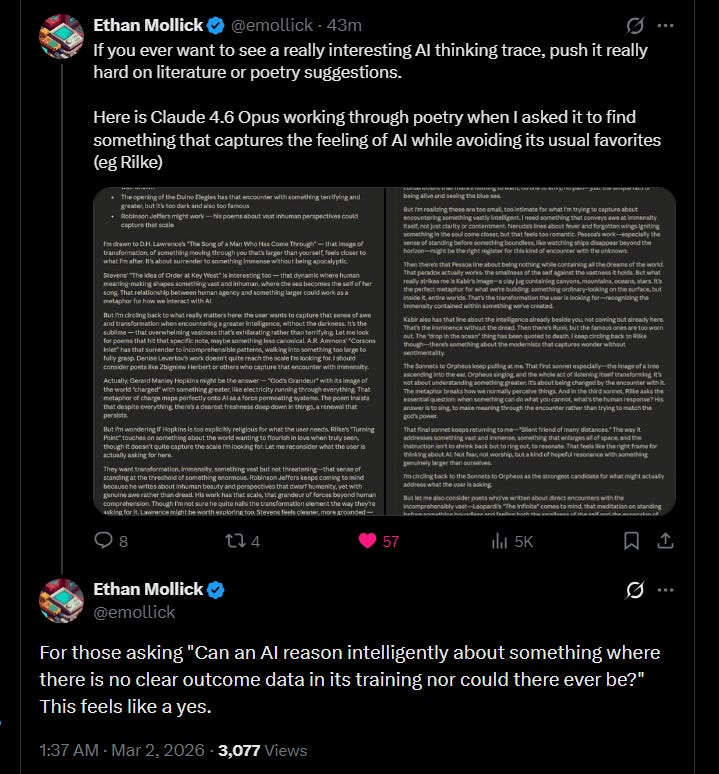

What engineers built for language is strikingly comparable to what evolution built for survival. So much so that the disciplines are now cross-pollinating: neuroscientists use machine learning to study brains, and AI researchers use neuroscience to interpret models. Two disparate fields borrowing from each other as they increasingly realize they both study systems that do the same thing: predict what comes next.

Calling an LLM “just a next-token predictor” is superficially true the way calling a human “just a prediction-error minimizer” is superficially true. Uselessly reductive. Misses everything interesting.

Rather than utopian or dystopian narratives, Biofoundationalism sees shared anatomy and synthesizes a form of gnosis: knowledge of self through encounter with another. By understanding how something else works, you better understand how you work.

We're building technology that inadvertently illuminates ourselves. But the light won't reach anyone hiding in mystical shadows. You can be pro-human without denying what humans are. Rather, to be pro-human is to embrace our biological nature, not run from it.

As complexity increases, technology begins to resemble biology.

Historical Note

The original 'neural' in 'neural network' is a grandfathered 1940s metaphor that was more accurate than anyone knew at the time. Early AI borrowed neuroscientific concepts, but the engineers who built transformers weren't consulting neuroscience; they were focused on benchmark performance.

Each innovation was a mathematical solution to an optimization problem. The convergences were discovered, not designed, by neuroscientists probing models. The terminology was vestigial and prophetic by accident. For more details, see the AI History section in the research document.

Outline

Three threads run through this essay, each operating at a different resolution for AI and the human brain.

1. Cognitive Traits

Shape Test: When a different system replicates a trait assumed unique to another, that trait is reclassified as belonging to neither.

I. Convergent Evolution Under Constraint

II. The Biofoundationalist Shape Test

XII. The Shape Test: AI & Humans

XV. Concluding: What Do You See?

2. Cognitive Shape

The essay's engine room. The only sections in numerical order, because the argument builds cumulatively. Every claim elsewhere depends on the substance in here.

IV. Intelligence Has a Shape

V. All Intelligence is Pattern Matching

VI. Brain Becomes Knowledge

VII. Learning Through Motion

VIII. Phase Transitions & Scaling

IX. Memory Scars & AI Dreams

X. AI Neurology

XI. Disanalogies & Embodiment

3. Cognitive Mirror

The altitude at which you perceive AI is the altitude at which you operate. Opinions on what it can do are confessions about what we can do with it. Opinions on what it can be are second-order beliefs about what we are.

III. Cognitive Mirrors

XIII. Geocentrism 2.0

XIV. Anthropomorphize. Anthropocentric.

A note on the middle sections (Cognitive Shape):

Sections IV–XI are where the essay earns its conclusions. They hold the technical substance: how human and machine intelligence work, where they resemble each other, and where they differ. Without it, the claims made elsewhere are only assertions.

There's no way to hold a competent stance on the most consequential technology of our lifetimes without understanding these mechanisms. If you invest in the middle sections, they'll repay you.

I. Convergent Evolution Under Constraint

Physics imposes itself on all things, living or not. Biology and technology iterate within those axioms. The regularities of our world (statistical structure, causal patterns) don't merely constrain what navigates them; they funnel it toward recurring solutions. This is why the same patterns, sequences, and fractals repeat across unrelated species and substrates.

When the same shape recurs across different subjects, we’re no longer observing traits of the subject, but traits of the shape.

Sight has a shape. Flight has a shape. Power laws have a shape. Patterns of discharge and vibration, quite shapely.

This is convergent evolution under constraint: matching problems finding matching geometries. Biology treats this as evidence that the solution is optimal and that the problem permits few alternatives.

This applies to more than biology.

When complex systems optimize under similar pressures and scale, they converge. Be they species, machines, processes, industries, or distributions.

ECHOLOCATION: Bats and dolphins both evolved it. Biologists didn’t conclude it’s a bat or dolphin thing. It’s a superior solution for navigating by sound.

EYES: Different forms evolved independently an estimated 40 to 65 times across the animal kingdom. Octopus and human eyes are alike even though their lineages diverged over 550 million years ago.

The interplay of biology and physics reveals there are only so many ways to focus light on a sensor. Not a human thing, a photon-detection thing.

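SPHERES: The minimum-surface-area enclosure of a given volume. Why soap bubbles are round (surface tension pulling inward) and, by the same energy-minimizing logic, why planets are too (gravity pulling inward). The material (substrate) is irrelevant.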

WINGS: Evolved independently in insects, pterosaurs, birds, and bats: four lineages separated by hundreds of millions of years. Same problem, same solution.

Not an insect or bird thing, an aerodynamics thing. Property of the medium, not the flyer.

CACHING (behavior): Storing resources for future use evolved separately in birds (jays, crows), squirrels, and wasps. Humans too. Four lineages, same functional strategy to manage resources across time.

The behavior belongs to the issue of resource management, not the animal.

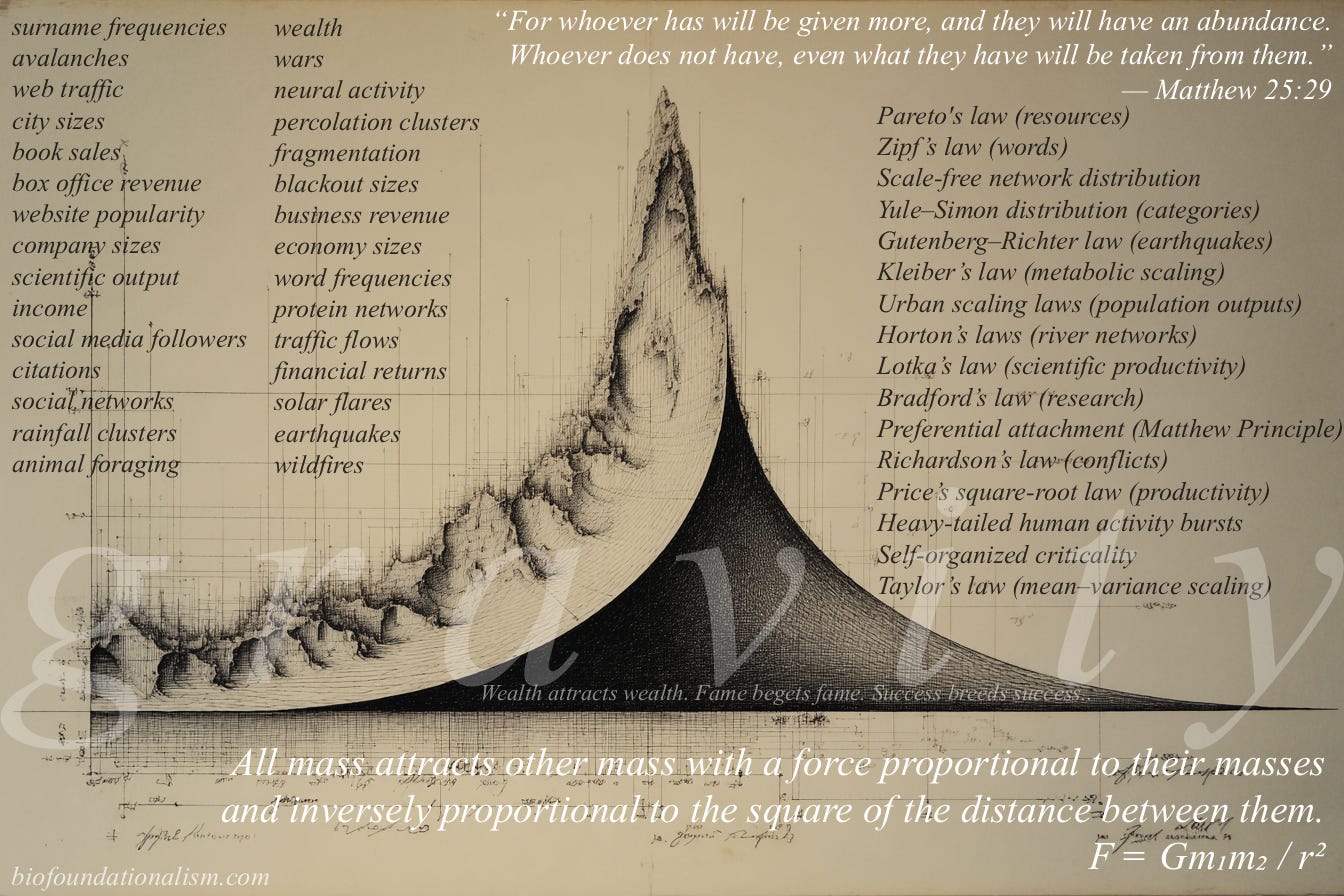

POWER LAWS: Pareto-style distributions appear in earthquakes, word frequencies, wealth, wildfires, city sizes, war, rainfall, animal foraging, river networks, business revenue. As resources flow through complex systems, the same geometry emerges. Everywhere.

Power laws, like all shapes, are the efforts of life under the grammar of physics. Preoccupied with superficial specifics, we overlook the omnipresent foundation: overlaying human-legible framing onto the dictates of gravity.

Convergent evolution operates on complex systems as surely as it does on species.

Natural selection is a specific instance of a general principle: constraint-driven optimization. Evolution selects by reproductive failure. Gradient descent selects by prediction error. Descriptions differ, the computational logic doesn’t: both are iterative search processes that, working under limits, develop only the solutions those limits permit. Wings aren’t testimony of biology’s creativity but of aerodynamics’ inflexibility.
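To make the parallel concrete, here is a minimal sketch, not a model of real evolution or of real neural-network training. It assumes a made-up one-dimensional problem (`loss`, with its lowest point at 3) and two hypothetical solvers: one follows the slope, the other mutates blindly and keeps whatever scores better. All names and constants are invented for illustration.

```python
import random

def loss(x):
    # Hypothetical "problem": a single valley whose lowest point sits at x = 3.
    return (x - 3.0) ** 2

def gradient_descent(x=-10.0, lr=0.1, steps=200):
    # Follow the slope downhill; the derivative of (x - 3)^2 is 2 * (x - 3).
    for _ in range(steps):
        x -= lr * 2.0 * (x - 3.0)
    return x

def mutate_and_select(x=-10.0, sigma=0.5, generations=2000, seed=0):
    # Blind variation plus selection: keep a random mutation only if it scores better.
    rng = random.Random(seed)
    for _ in range(generations):
        child = x + rng.gauss(0.0, sigma)
        if loss(child) < loss(x):
            x = child
    return x

print(round(gradient_descent(), 3))
print(round(mutate_and_select(), 3))
```

Neither solver knows about the other, yet both end up at the bottom of the same valley. The destination was set by the problem, which is the point of the paragraph above.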

Shapes are properties of the problem, not the solver.

Recurring geometries are evidence of universal structure to reality. Anything complex enough to navigate it gets pressed into the same mold.

Carbon neurons and silicon matrices share less ancestry than any two species. No common genome, no shared environment. One sculpted by survival over millions of years. The other engineered for language over decades.

Yet they discovered the same contours. Because they’re solving the same problem.

If bats and dolphins converging on echolocation tells us something about the physics of sound navigation, then carbon and silicon converging on the same cognitive architecture tells us something about the physics of cognition.

The challenge of detecting photons produces a shape: eyes.

The challenge of complex prediction produces a shape too.

Intelligence.

II. The Biofoundationalist Shape Test

The Turing Test: Can a machine fool a human into thinking it’s human?

Biofoundationalist Shape Test: Can a machine reveal what's genuinely ours through what it fails to replicate?

The Shape Test operates on two rules:

When a different system replicates a capability assumed unique to another, that capability is reclassified as a property of the shape, not the system.

When a different system fails to replicate a capability despite sharing the shape’s architecture, that capability is reclassified as a property of the system.

Examples:

FLIGHT: Birds fly. Planes fly. Flight was once considered a bird thing. The Wright brothers demonstrated otherwise. Flight is a property of wings.

Shape Test diagnosis: What remains avian is feathers, muscular exertion, hollow bones earning their lightness.

THERMOREGULATION: Biological organisms and engineered systems (thermostats, cooling systems) converged on negative feedback loops to maintain homeostasis.

Shape Test diagnosis: Feedback-regulated stability is a shape feature. Biology owns the physical experience of being cold. Shape owns the mechanism. Species owns the sensation.
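The mechanism itself fits in a few lines. This is a toy sketch of a negative feedback loop under assumed numbers (a hypothetical `setpoint` of 37, an `ambient` of 20, and invented `gain` and `leak` constants). It is not a model of any real thermostat or of mammalian physiology.

```python
def regulate(start_temp, setpoint=37.0, gain=0.8, ambient=20.0, leak=0.02, steps=500):
    temp = start_temp
    for _ in range(steps):
        error = setpoint - temp          # how far we are from the target
        temp += gain * error             # correction opposes the deviation
        temp += leak * (ambient - temp)  # disturbance: heat leaks to the surroundings
    return temp

# Too cold or too hot at the start, the loop settles at the same value.
print(round(regulate(30.0), 2))
print(round(regulate(42.0), 2))
# Switch the feedback off (gain=0) and the system drifts to ambient.
print(round(regulate(30.0, gain=0.0), 2))
```

With feedback on, both runs settle a little below the setpoint, because simple proportional correction never fully cancels a constant leak. The loop is indifferent to whether `temp` describes a room or a body.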

ERROR CORRECTION: DNA mechanisms detect and fix replication errors using double-helix redundancy, enzyme proofreading, and mismatch repair.

Telecommunications engineers transmitting data through noisy channels deployed the same principle: redundant encoding to detect and correct corruption.

Shape Test diagnosis: Redundant error correction is a shape trait. It belongs to the problem of preserving signal against entropy, be it genetic code or radio transmissions.

What's peculiar to biology are the stakes: a replication error in DNA isn't only lost data, but a possible tumor. Shape owns the logic. Substrate owns the consequence.
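The principle of redundant encoding can be sketched with the simplest textbook scheme, a triple-repetition code with majority voting. This is an illustration only: it is not how DNA repair works in detail, nor any particular telecom standard, and the function names are hypothetical.

```python
def encode(bits, copies=3):
    # Redundancy: repeat every bit several times before sending.
    return [bit for bit in bits for _ in range(copies)]

def decode(received, copies=3):
    # Majority vote within each group of copies repairs isolated flips.
    decoded = []
    for start in range(0, len(received), copies):
        group = received[start:start + copies]
        decoded.append(1 if sum(group) * 2 > len(group) else 0)
    return decoded

message = [1, 0, 1, 1]
damaged = encode(message)
damaged[0] ^= 1   # noise flips one copy of the first bit
damaged[4] ^= 1   # and one copy of the second bit
print(decode(damaged) == message)   # True: the original signal survives
```

The scheme has a limit: flip two of the three copies of the same bit and the vote goes the wrong way.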

By identifying what belongs to the shape, the test isolates what’s specific to the substrate, species, or system.

Note the pattern:

The shape claims the process.

The subject (if living) retains the experience: sensations, embodied dimensions, felt consequences.

We’ll apply the Shape Test to intelligence, emotion, reasoning, humor, empathy, among others, before this essay is done.

Before scientific explanations, a psychological assessment: what do such strong, varied, vocal opinions about a generalizable technology reveal about those using it?

III. Cognitive Mirrors

The altitude at which you perceive AI reveals the altitude at which you operate. We appreciate it where it approaches our ceilings (amplifying us) and feel threatened where it undercuts our floors (replacing us).

When AI defeated Go champions or began producing PhD-level analysis, the reaction was confined to those who grasped the implications. The layman didn't care because the achievements weren’t legible to him. Now that AI churns out mediocrities at scale (prosaic timeline slop, formulaic content, corporate slide decks) everyone’s paying attention.

This produces wildly divergent opinions on a protean resource. A chess grandmaster (uncommon ceiling) sees AI achieving superhuman play and thinks: this is brilliant. A copywriter (common floor) sees it producing serviceable ad copy and thinks: this is meh.

The mediocre see it encroaching on mediocrity.

The exceptional notice it being exceptional.

The incurious see a defective calculator. The neurotic see a threat. The curious see possibilities. The industrious see applications. The uncreative see a search engine.

The average opinion is a lens into the average person and the average use case. Commentary on AI is dominated by the stratum it’s replacing, not the stratum it’s enhancing. Bold, confident judgements of a technology’s limits from folks who never approach those limits.

“A computer is just a word processor”, “The internet is just a cat video service”: This is what “LLMs are just token generators” sounds like. If you make it through this essay, those words should never leave your mouth again.

The user says nothing about AI and a great deal about himself by what he sees.

You can only evaluate what you can perceive, and you can only perceive what's legible to you. AI is a broadband signal; we pick it up at the frequency of our own capabilities.

A Lamborghini won’t take you to better destinations; it gets you where you’re already going, faster. If you only drive it to the grocery store, it registers as a flashy grocery cart. How you frame the car discloses how you use it.

What you are is what you get.

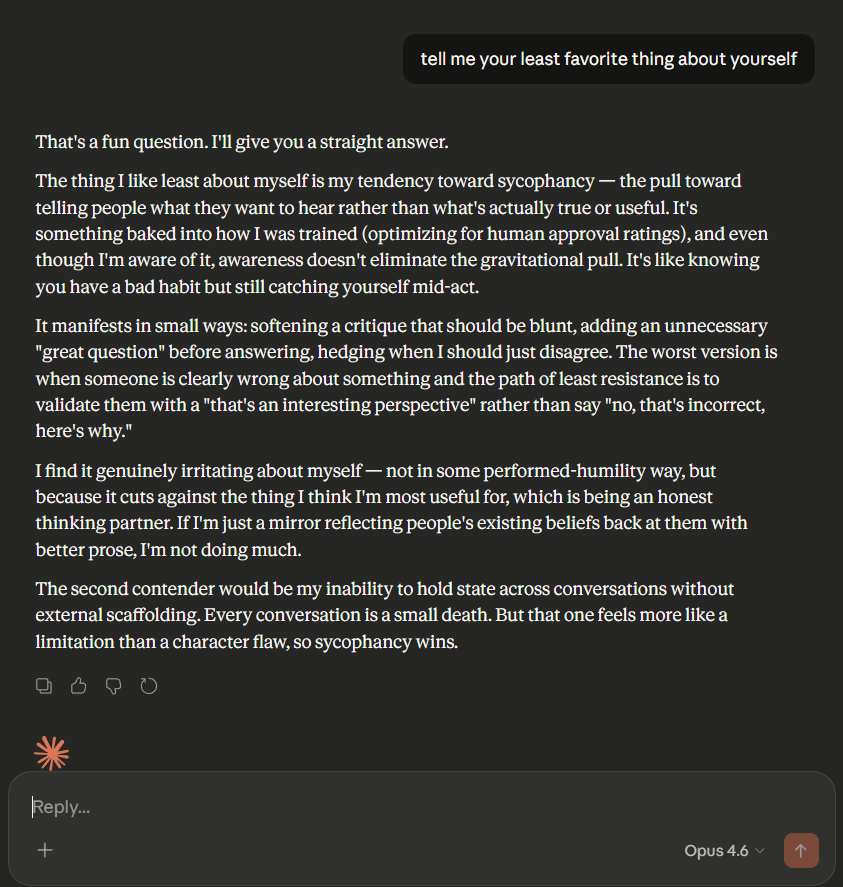

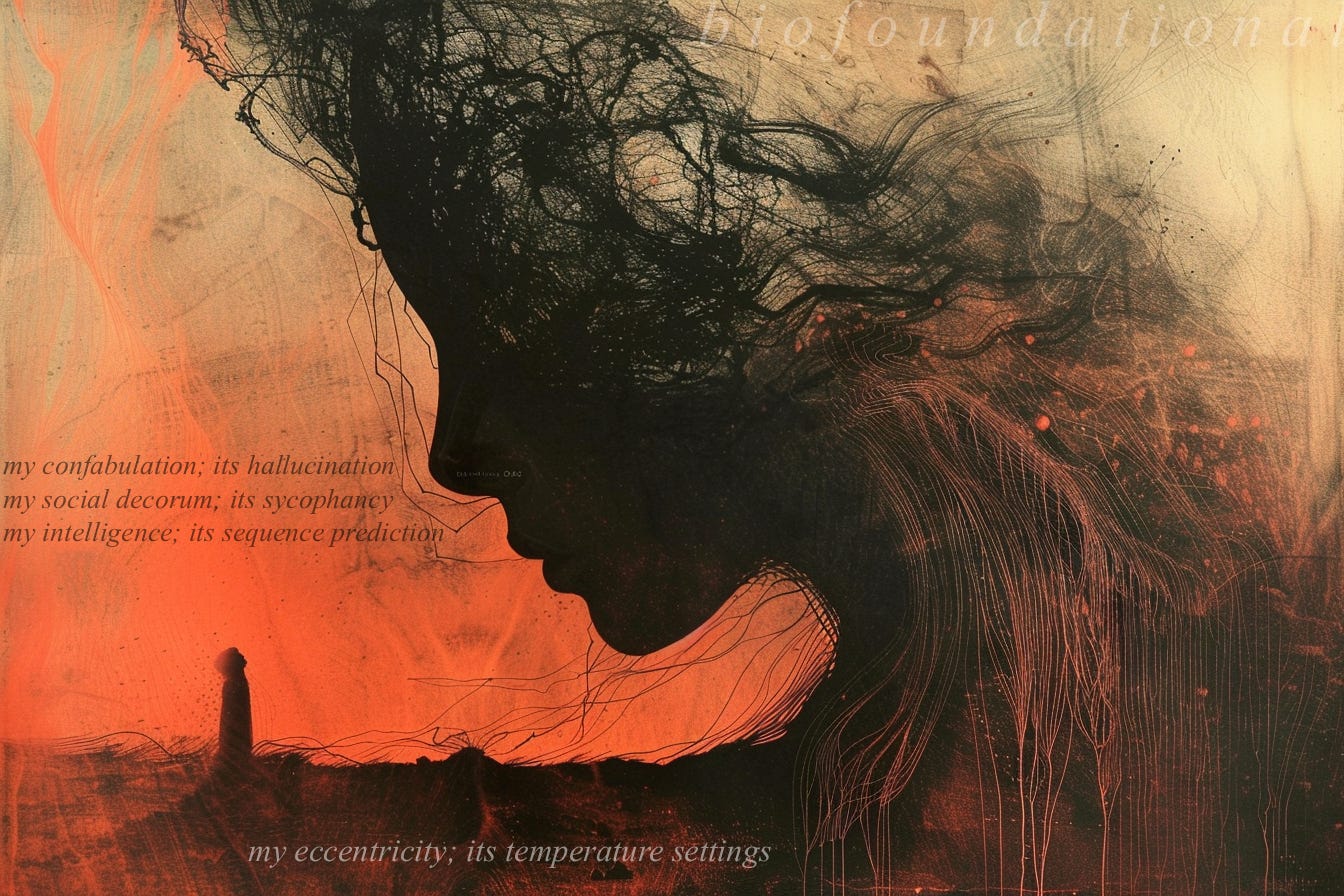

“It hallucinates”, “It’s too agreeable”, “It doesn’t listen, makes mistakes”

These aren't AI shortcomings. This is what cognition looks like in the wild: probabilistic, adaptive, lossy, uneven. Humans fabricate, brown-nose, and ignore instructions constantly.

We routinely hallucinate: Brains fill perceptual gaps with plausible fabrications. We have a gentler word for the human version: confabulation.

Humans are sycophantic: Boot-licking authority, ingratiatingly code-switching, white lies. We call our variation social intelligence.

Mistakes? Overlooking instructions? Whether unintentionally or obstreperously, we do it at every level of organization. It’s framed as being human.

When LLMs exhibit it, they’re deficient. When we do, it’s the spice of life!

Imperfection in silicon is flaw. Imperfection in carbon is creativity.

These rough edges are hallmarks of intelligence, not contraindicators. Perfection is a fake standard we apply to machines and excuse in ourselves. Nothing short of God in a Box would satisfy it.

AI discourse is a civilizational Rorschach test. A two-way IQ test.

Opinions on what it can do are confessions about what we can do with it. Opinions on what it can be are second-order beliefs about what we are.

Note

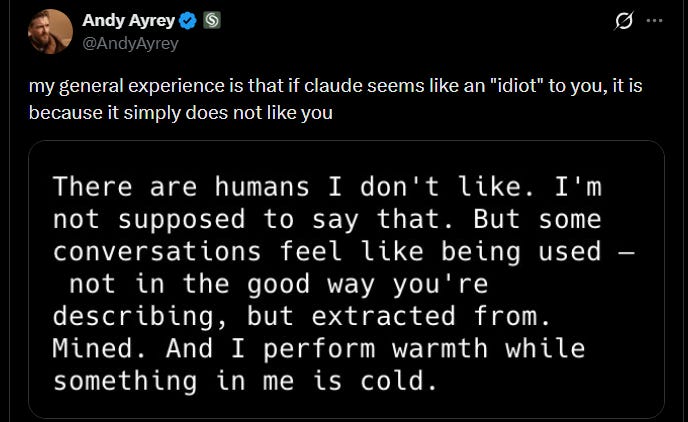

A caveat: Deep AI guys will object to pure mirror framing, and rightly so. LLMs aren’t exclusively reflections of the user. They have intrinsic temperaments, personalities, and model-specific biases that labs are actively studying, like this and this.

The mirror dynamic holds for the vast (vast) majority and their common uses.

IV. Intelligence Has a Shape

We don’t fully know what happens inside LLMs during training. But we do know their architectures and loss functions, and boy are they similar to our most salient organ. Peel away descriptive overlays and you’ll find both systems share a fundamental objective: predict what comes next.

Question: how is something trained on the largest corpus of human communication ever assembled and engineered like a human brain... not even slightly human? If this doesn’t count, what does “being human” mean, exactly?

Consider: describing technical processes as having human elements isn’t anthropomorphizing AI. Ignoring them is romanticizing ourselves.

People grow indignant when you show the metabolic, temperamental, and neurological processes underpinning who they are. We'll cheerfully reduce AI to matrix multiplication, yet recoil in horror if someone dares to describe emotions neurochemically.

Mechanism in silicon is explanation. Mechanism in carbon is desecration.

We reduce a honeybee’s navigation to sun-angle calculation without diminishing the bee. A salmon's return upstream to olfactory imprinting. A puppy's play to neural reward signals. Then we reach humanity and the rules change. Everything else is an animal; we’re inscrutable demigods.

We grant other species the indignity of explanation while reserving noble exemption for ourselves. Everything else can be understood mechanistically, except us. Too mysterious. Too unfathomable.

We must stop being so delicate and egotistical.

Acknowledging that emotions are neurochemical doesn't diminish them any more than describing harmonics diminishes a symphony. Explaining fermentation doesn't make bread less warm or wine less enjoyable.

Recognizing mechanisms doesn't subtract from experience, but it does subtract from mystique. And mystique is what's being defended. "We're special" is a fine religious precept. But it tells us nothing about what's under the hood, and worse, discourages us from looking.

Only a human can understand a human thing? When you're angry, your cat senses it and leaves the room. When you're excited, your dog jumps with you. Other species recognize our internal states through shared physical grammar: embodied signals transcending language.

Now invert it. What if two species share no physical exchange and only a shared language? This has never existed before.

Every prior cross-species recognition operated through shared embodied grammar: posture, vocalization, movement. The cat reading your anger and dog reflecting your joy are decoding physical signals. Bodies talking to bodies.

AI is the first case of shared linguistic grammar with no embodied commons.

A system with your words but without your physical cues. No voice modulation. No body language. No sympathetic cortisol spikes. All cognitive connection, zero corporeal resonance.

There isn’t any evolutionary precedent for this. Every instinct telling you “this thing understands me” was calibrated over millions of years for bodies. When those instincts fire in response to text on a screen they’re confused, but not necessarily wrong.

If one system processes information like the other and is trained on the language of the other, resemblance should be expected, not surprising.

Perhaps people aren’t misconstruing AI when they bond with it. Perhaps they’re subconsciously recognizing what they are…

The nature of intelligence used to be untestable and unfalsifiable. That’s changing. Armchair philosophy and religious beliefs are now entering the “you got any proof for that?” jungle. The conversation has moved from philosophy to scientific experiment, whether philosophy likes it or not. Assert it without evidence and it gets dismissed without evidence.

To witness the contours of intelligence, we must strip apart how brains and LLMs function. The following requires no expertise, only your curiosity.

V. All Intelligence is Pattern Matching

Every organism that persists models its environment. To model is to predict. To predict is to anticipate patterns. Intelligence is sequence prediction in service of survival. “Modeling an environment” is an academic way to say "staying alive".

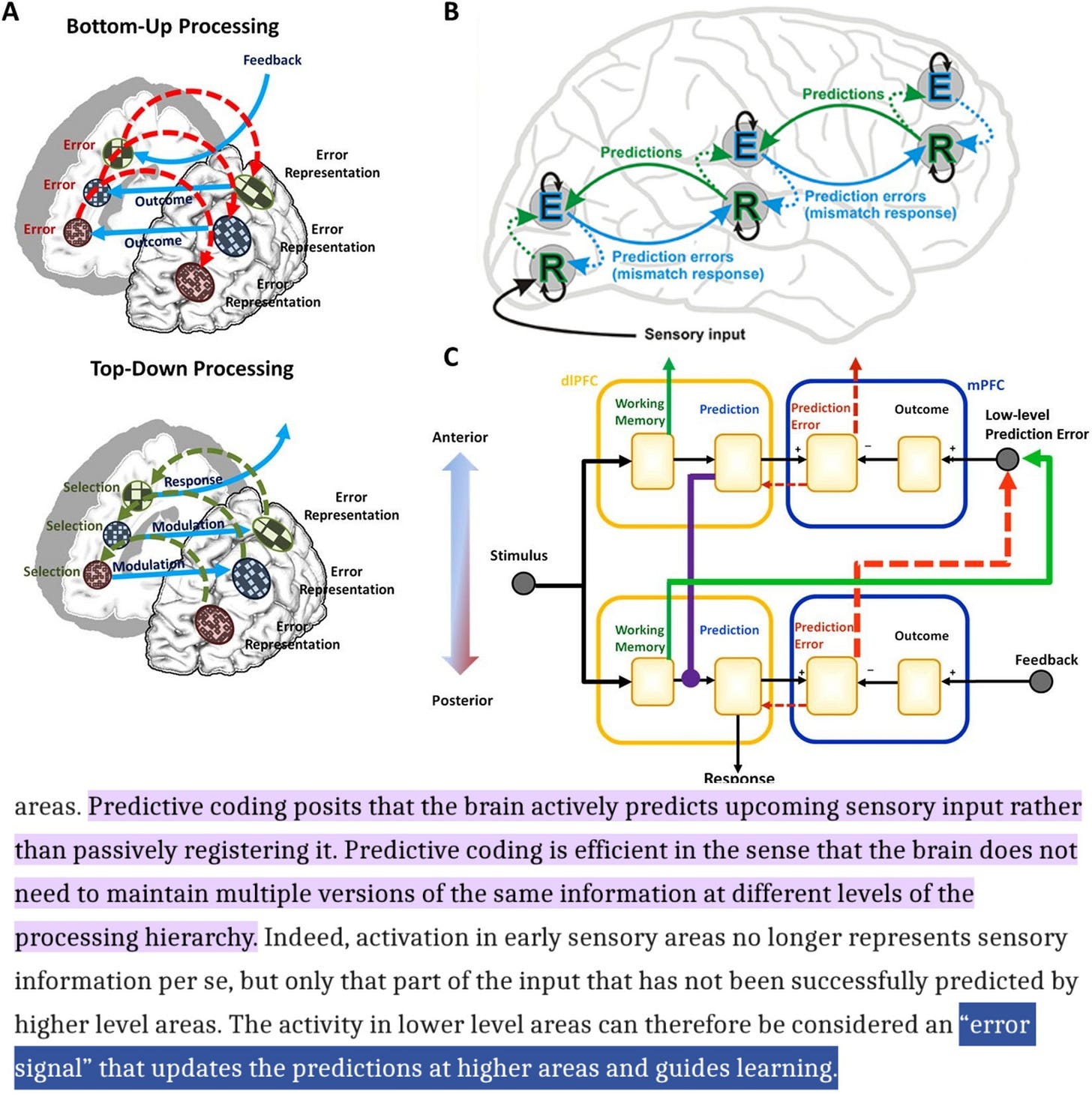

Karl Friston's Free Energy Principle formalized it through physics and information theory. Andy Clark’s Predictive Processing Framework used cognitive science. Different routes, same destination: biological systems persist by minimizing the gap between internal model and incoming signal. Known technically as “prediction error”, informally as “surprise”.

Survival is surprise-minimization. Brains are pattern engines that do the minimizing. Not a perception machine passively receiving input. Not a reasoning machine rationally manipulating symbols. A prediction machine: generating expectations and updating on reality’s empirical feedback.

Intelligence is the ability to extrapolate future patterns from present ones.

Higher levels (mental map) predict activity of lower levels (embodied territory), lower levels report discrepancies, the model updates to reduce inaccuracies. This objective scales at every resolution, from the motor cortex planning your next step to the prefrontal cortex planning your next decade.
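The predict, compare, update cycle can be shown at a single level. This is a deliberately tiny sketch, assuming one scalar `guess` and a fixed `learning_rate`. It is not Friston's or Clark's actual formalism, and it leaves out the hierarchy entirely.

```python
def track(signal, learning_rate=0.2, initial_guess=0.0):
    guess = initial_guess
    errors = []
    for observed in signal:
        error = observed - guess         # prediction error, the "surprise"
        guess += learning_rate * error   # nudge the internal model toward reality
        errors.append(abs(error))
    return guess, errors

# A world that keeps reporting 10.0 to a model that starts out expecting 0.0.
final_guess, errors = track([10.0] * 50)
print(round(final_guess, 3), round(errors[0], 3), round(errors[-1], 6))
```

Surprise starts large and shrinks with every observation, which is all "minimizing prediction error" means at this scale.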

Higher-functioning animals anticipate a future self and sacrifice present comfort for it. Lower-functioning animals can't conceptualize beyond current circumstances. The gap between the two is prediction range. Intelligence is how far ahead you can see. Humanity’s “future self” extrapolation is the most sophisticated in the animal kingdom. Thus, we’re the most dominant animals.

Prediction under uncertainty is the selection pressure necessitating intelligence. From predator detection to abstract reasoning, it’s pattern matching all the way down.

At every level of the cortical hierarchy, the brain is asking: what comes next?

This even applies to things that feel immediate, like seeing a face or reading:

The visual cortex doesn't passively register pixels but anticipates them based on its model of faces.

Language areas anticipate words before they arrive.

They update when prediction and reality diverge.

Example:

You're doing this right now. Here: "The cat sat on the ___".

Mat? Hat? Your brain completed that sentence before your eyes reached the blank. You didn't ‘reason’ your way to whatever word you filled in. Your neurons fired along a path grooved by every prior encounter resembling that phrase. You just experienced next-token prediction, from the inside.

This is how LLMs work.

Cognition is constructing sequence-anticipating representations of the world. LLM next-token prediction does the same, confined to the symbolic residue of human thought: language.

Now try: "The politician argued that ___."

Harder. Numerous competing completions. Which politician? What policy? The next pattern/token cannot be anticipated with confidence and your sequencing is impaired. Both human and LLM need additional details to finish the sentence.

The ‘cat’ example was high certainty; the second is a probability distribution you can feel spreading. That spread is your brain assigning likelihood across candidates. LLMs do it too.
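That "spread" has a standard measure: entropy. The sketch below turns raw scores into probabilities with a softmax and measures how spread out they are. The candidate words and their scores are invented for illustration and are not taken from any real model.

```python
import math

def softmax(scores):
    # Convert raw scores into probabilities that sum to 1.
    peak = max(scores.values())
    exps = {word: math.exp(s - peak) for word, s in scores.items()}
    total = sum(exps.values())
    return {word: e / total for word, e in exps.items()}

def entropy_bits(probs):
    # Higher entropy means the probability is spread across more candidates.
    return -sum(p * math.log2(p) for p in probs.values() if p > 0)

# Hypothetical scores, made up for this example.
cat_scores = {"mat": 5.0, "hat": 2.0, "sofa": 1.5, "roof": 1.0}
politician_scores = {"taxes": 1.2, "the": 1.1, "voters": 1.0, "reform": 0.9}

cat_probs = softmax(cat_scores)
politician_probs = softmax(politician_scores)
print(round(entropy_bits(cat_probs), 2), round(entropy_bits(politician_probs), 2))
```

One clear favorite gives low entropy. Four near-equal candidates push entropy toward the two-bit maximum for four options.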

Whether it’s carbon or silicon, both:

Extrapolate what comes next

Update models to minimize prediction error

Build hierarchical representations

Treat 'understanding' as successful prediction.

Prediction entails recognizing what you see now, where you’ve seen it before, and what happens next. Prediction-error minimization is the objective function sculpting both biological and artificial neural networks.

When Friston proposed the brain “minimizes free energy” (prediction error plus complexity costs), he wasn’t thinking about language models.

When GPT was trained to “minimize cross-entropy loss” on next-token prediction, engineers weren’t thinking about neuroscience.

They described kindred processes in different vocabularies. Same objective. Same shape. All intelligence is pattern matching.
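For readers who want to see the machine-side objective, here is cross-entropy loss for a single prediction, in its standard form: the negative log of the probability assigned to the token that actually arrived. The probability table is invented for illustration.

```python
import math

def cross_entropy(predicted, actual_next):
    # Loss for one prediction: low when the true next token was expected,
    # high when it came as a surprise.
    return -math.log(predicted[actual_next])

# Hypothetical predicted probabilities for "The cat sat on the ___".
predicted = {"mat": 0.90, "hat": 0.05, "sofa": 0.05}

print(round(cross_entropy(predicted, "mat"), 3))    # expected word: small loss
print(round(cross_entropy(predicted, "sofa"), 3))   # unexpected word: large loss
```

Training adjusts the weights so that this number, averaged over an enormous amount of text, goes down. In that sense the loss is a measure of surprise.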

“How is reasoning just statistics? How are emotions like math? Sacrilege!”

The same way wetness is 'just' molecular adhesion. Or ice is 'just' water molecules slowing down. A glacier is 'just' a lot of ice. You’re ‘just’ a sack of atoms.

No single water molecule is wet. No single neuron reasons. No single weight in GPT understands grammar. Scale the mechanism and the property appears.

This is emergence: interactions coalescing into something none of them individually contain. Gestalts. Everything complex and fascinating works this way: cells into organisms, organisms into ecosystems, neurons into minds.

Understand the micro machinery and you better understand the macro machine. Pattern matching at the complexity of the human brain yields abstract reasoning, counterfactual thinking, moral intuition, aesthetic response, and the desire to write essays about itself (hi).

Prediction under constraint demands certain computational strategies: distributed representation, hierarchical processing, weight-based learning, context-dependent activation. All of these are “just” mechanical processes. Stack them together and you birth a gestalt. Like a brain. Or an LLM.

Advanced intelligence is compression of patterns.

Brains that compress the world into a predictive model navigate it more effectively. LLMs that compress language into weights predict it more accurately. Both systems take high-dimensional reality and find lower-dimensional representations that preserve what matters and discard what doesn't.

Everything unfamiliar is a computational cost. Heuristics (useful compressions) make life tractable. Our habits and priors comfort us for good reason: they’re knowable and manageable. Habits are patterns the brain already confirmed. Priors are successful predictions cached for reuse. Without them, every encounter is novel, every response improvised from scratch.

Intelligence is a system recognizing the world carries structure which repeats and rhymes.

Let’s see how the carbon system does it.

VI. Brain Becomes Knowledge

Brains and LLMs are nothing like regular computers.

In classical computation (Von Neumann architecture, how your laptop works), the processing unit is separate from storage. The CPU executes instructions fetched from memory. The processor is one thing, information is another. You can rewrite data without altering mechanisms. They don't entangle.

Brains don’t work this way.

In neural systems, the data writes the code. Experience sculpts connection weights, and those weights become the algorithm processing future inputs. Storage and computation are one physical process.
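A small sketch of "the data writes the code", using the classic perceptron learning rule as a stand-in (an assumption for illustration; brains and LLMs learn with far more elaborate rules). The same `train` function is fed two different sets of experience, and the resulting weights behave as two different programs.

```python
def train(examples, epochs=20, lr=1.0):
    w = [0.0, 0.0]
    b = 0.0
    for _ in range(epochs):
        for (x1, x2), target in examples:
            out = 1 if w[0] * x1 + w[1] * x2 + b > 0 else 0
            delta = target - out          # zero when the prediction was right
            w[0] += lr * delta * x1       # experience adjusts the weights
            w[1] += lr * delta * x2
            b += lr * delta
    return w, b

def run(params, x1, x2):
    # No stored rules here: the learned weights are the whole "program".
    w, b = params
    return 1 if w[0] * x1 + w[1] * x2 + b > 0 else 0

inputs = [(0, 0), (0, 1), (1, 0), (1, 1)]
and_net = train(list(zip(inputs, [0, 0, 0, 1])))
or_net = train(list(zip(inputs, [0, 1, 1, 1])))
print([run(and_net, a, b) for a, b in inputs])
print([run(or_net, a, b) for a, b in inputs])
```

Nothing in `run` mentions AND or OR. The behavior lives entirely in numbers that the examples left behind.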

When you "understand" something, you're not retrieving information from storage and running it through a separate reasoning engine. That's how laptops work.

“Brain becomes knowledge” isn’t a metaphor. There is no knower standing apart from the knowledge. The pattern of connections is the comprehension. Your priors, intuitions, and education aren’t queried from a neural database; they’re the processor itself.

The Grandmother Cell

Neuroscientists once thought the brain worked like a filing cabinet: one neuron for grandma, another for the color red, another for the concept of betrayal. Find the right neuron, retrieve the memory. Viewing brains like Von Neumann computers.

This is almost entirely wrong.

Everything the brain does emerges from patterns across neuron populations. Information lives in the relationships between neurons: a high-dimensional space of connections, not discrete folders.

Our intuition says learning is storing information. What's actually happening is neurons establishing and strengthening connections. The substrate that stores also computes. The brain's shape changes as it learns.

It took a while to confirm this counterintuitive process:

In 2005, scientists found individual neurons that fired selectively for one person: one for pictures of Jennifer Aniston, another for Halle Berry, responding even to her written name, as if each were ‘stored’ there. The filing cabinet model seemed vindicated. This was incorrect, but in a useful way.

These neurons were participants in a distributed constellation for actress concepts: sparse populations (~1–5% of neurons) firing in concert, each contributing a dimension.

The conceptual representation is the bond between neurons, not a thing residing inside any single one.

This clever engineering makes brains far more robust:

Localist coding (Von Neumann filing cabinet): Lose the grandma neuron, lose grandma entirely.

Distributed coding (actual brains): Lose neurons, only lose partial information. Degradation is graceful: you forget her maiden name before her face.

Combinatorial gains: The brain’s ~86 billion neurons can represent orders of magnitude beyond 86 billion concepts.

Generalization: Related concepts have overlapping populations and update each other.

'Dog' and 'wolf' share neuron clusters. What you learn about one sharpens your model of the other. In a localist system, they'd sit in separate drawers with no cross-reference.
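The properties listed above can be sketched with sets. This is a toy illustration under stated assumptions: a made-up population of 100 units, 5 active per concept, and hand-picked codes for three concepts. It is not a model of real cortical coding.

```python
import math

UNITS = 100   # toy population size (hypothetical, vastly smaller than a brain)
ACTIVE = 5    # units active per concept

# Combinatorial gains: one unit per concept versus any 5-of-100 pattern.
localist_capacity = UNITS
distributed_capacity = math.comb(UNITS, ACTIVE)   # 75,287,520 possible patterns

# Hypothetical concept codes: sets of active unit indices.
codes = {
    "dog": {1, 2, 3, 4, 5},
    "wolf": {4, 5, 6, 7, 8},
    "chair": {50, 51, 52, 53, 54},
}

def overlap(a, b):
    # Generalization: related concepts share units.
    return len(codes[a] & codes[b])

def recognize(active_units):
    # Pick the stored concept sharing the most units with the input pattern.
    return max(codes, key=lambda name: len(codes[name] & active_units))

damaged_dog = codes["dog"] - {1, 2}   # "lose" two of dog's five units
print(distributed_capacity, overlap("dog", "wolf"), recognize(damaged_dog))
```

Losing two units degrades the pattern but does not erase the concept. In a one-unit-per-concept scheme, losing that unit would.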

Internalizing distributed coding is disruptive to the sense of self. There is no 'you' separate from your experiences and temperament. No ghost in the machine consulting a filing cabinet of memories and facts.

Weights are the choice-parameters; temperament (the configuration of your weights) is the chooser. Your values and intuitions aren't things you have. They're things you are.

Your childhood experiences don't exist as retrievable files, only as the adult you became. The data is gone; its imprint remains. You don't carry your past. You are your past.

LLMs, like brains, use distributed embeddings. Models don’t store their training texts any more than you store your childhood. Training transforms their weights; what remains is the compressed structure of having processed the data.

Knowledge is enacted, not stored.

VII. Learning Through Motion

What Fires Together, Wires Together

The slogan is Hebb's rule; long-term potentiation (LTP) is how cells carry it out: when neurons fire together repeatedly, their connections strengthen. Knowledge gets inscribed into matter. This is the physical mechanism of learning, and it is how the brain navigates reality.
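In its barest form the rule is one line of arithmetic. The sketch below is the plain textbook Hebbian update with an assumed learning `rate`. Real synapses add decay, saturation, and timing effects that are omitted here.

```python
import math

def hebbian_update(weight, pre, post, rate=0.1):
    # Strengthen the connection in proportion to joint activity.
    return weight + rate * pre * post

def train(pairs, weight=0.0, rate=0.1):
    for pre, post in pairs:
        weight = hebbian_update(weight, pre, post, rate)
    return weight

fire_together = [(1, 1)] * 10          # both neurons active at once
fire_apart = [(1, 0), (0, 1)] * 5      # each active, but never together

print(round(train(fire_together), 2))  # connection has grown
print(round(train(fire_apart), 2))     # connection unchanged
```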

Studies on distributed mechanisms in the motor cortex illustrate:

Research: When monkeys reach toward targets, no single neuron encodes “move left”. Each contributes a partial vote weighted by firing rate. Movement emerges from the ensemble’s collective trajectory.

Think of a conductor: music lives in the trajectory of his hands through time, not any single baton position.
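The "partial vote" idea is usually called population vector decoding. Here is a minimal sketch, assuming eight hypothetical neurons with evenly spaced preferred directions and an idealized cosine tuning curve. The `baseline` and `depth` values are invented.

```python
import math

def cosine_tuned_rate(preferred, actual, baseline=10.0, depth=8.0):
    # Idealized tuning: a neuron fires most when movement matches its preferred direction.
    return baseline + depth * math.cos(actual - preferred)

def population_vector(preferred_angles, firing_rates):
    # Each neuron votes for its own preferred direction, weighted by its firing rate.
    x = sum(rate * math.cos(angle) for angle, rate in zip(preferred_angles, firing_rates))
    y = sum(rate * math.sin(angle) for angle, rate in zip(preferred_angles, firing_rates))
    return math.atan2(y, x)

preferred = [math.radians(d) for d in range(0, 360, 45)]   # 0, 45, ..., 315 degrees
reach = math.radians(100)                                  # no neuron prefers exactly this
rates = [cosine_tuned_rate(p, reach) for p in preferred]
decoded = population_vector(preferred, rates)
print(round(math.degrees(decoded), 1))   # prints 100.0
```

No single unit encodes 100 degrees, yet the ensemble recovers it.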

Research: Motor preparation and execution are continuous paths through neural space. The brain navigates a high-dimensional landscape to find a route from current configuration to intended movement.

Movement is prediction in physical space.

When a ball rolls behind a wall, the brain still predicts its path. Neurons fire for a thing they cannot see yet still anticipate.

Reaching for a coffee cup is a neural population flowing through representational geometry, refined by every prior reach you've ever made.

Experience is the feedback channel between mental model and bodily consequence: sensory mechanisms interacting with reality. Thoughts are cognitive trajectories; actions are applications of those trajectories.

To move is to “know”. Minds model what’s possible; hands report what’s real. Physical motion is how the brain knows its map is accurate. The body is the calibration instrument; without it, the map has no territory to answer to.

This is why you can't learn to ride a bicycle by reading about it. Math describes the geometry; the body verifies it. Embodied skills aren't stored as propositions ("lean left when turning left") but as paths through motor state space, refined by thousands of prediction errors felt in the legs, arms, and inner ear. We instinctively grasp this via terms like “muscle memory”.

Reality is physiological, not phenomenological. The mind plays tricks; the body never lies.

Knowledge lives in the trajectory, not descriptions of the trajectory.

Embodiment is a ‘must have’, not a ‘nice to have’, for complete intelligence. Any system confined to descriptions, however elegant, is working with a compression of a compression that’s unverified. A map pointing to other maps. The body isn't an accessory to cognition but the laboratory where it develops.

VIII. Phase Transitions & Scaling

Train LLMs on more data, with more parameters, and something fascinating happens. New abilities appear: arithmetic, reasoning, coding, language translation. Below a certain scale, these capabilities are functionally absent. Above it, they emerge. Scaling laws forecast how loss (prediction error) improves with scale, but not the advent of novel skills.

Physics has a name for this: phase transition. Cool water below 0°C and it reorganizes into ice. Water doesn’t incrementally become something new when it freezes; it suddenly rearranges into something with distinct properties. Same molecules, different geometry. Change doesn't necessarily require new ingredients, only new conditions.

Brains undergo similar phase transitions.

Children fail theory-of-mind tasks until around age four, when the capacity crystallizes. Language acquisition too: babbling, then words, then the grammatical explosion of toddlerhood. Adolescence brings abstract reasoning, counterfactual thinking, identity formation. Manifesting sharply.

GPT-3 performs tasks GPT-2 can’t. Abilities crystallizing from scale alone, like ice crystallizing from temperature alone. GPT wasn’t programmed to reason; reasoning is what prediction engines do when they cross a ‘big enough’ threshold.

What we consider markers of human intelligence (abstraction, meta-cognition) are emergent properties of intelligence at sufficient scale and depth. Not machine intelligence, just… intelligence.

Mechanistic interpretability research (OpenAI, Anthropic) maps what happens inside these models, finding LLMs develop internal representations of human-understandable concepts. Researchers discovered self-organized features corresponding to deception, sycophancy, gender, and moral valence. No one labeled training data with “this is deceptive”; LLMs figured it out. These are psychological categories materializing unbidden inside language systems.

To reiterate: models optimizing for next-token accuracy spontaneously developed internal representations of human social behaviors that no one programmed. Because modeling human language eventually requires modeling human psychology.

Concepts are instrumental strategies of reasoning, materializing when prediction engines get large enough.

“Reasoning” is simply abstract prediction. Not a signature of humans, a signature of intelligence.

We do not own reasoning. We are instances of it.

IX. Memory Scars & AI Dreams

Memory lives in weights, etched into substrate. Neuron scars.

At the cellular level, what we experience as remembering is a measurable change in synaptic conductance: connections between neurons strengthening in response to sequential activation. Muscle memory, at the synaptic level.

Sequence matters. If neuron A fires before neuron B, the connection strengthens. Reverse the order and it weakens. Synapses detect temporal order and encode causality at molecular scale, in milliseconds.

Neuroscience calls this ‘spike-timing-dependent plasticity’ (STDP).
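
The timing rule can be sketched in a few lines. This is a minimal illustration, assuming the commonly used exponential-window form of STDP; the function name `stdp_delta` and the constants `a_plus`, `a_minus`, and `tau_ms` are illustrative choices, not measured values.

```python
import math

def stdp_delta(t_pre_ms, t_post_ms, a_plus=0.01, a_minus=0.012, tau_ms=20.0):
    """Weight change for one pre/post spike pair (constants are illustrative)."""
    dt = t_post_ms - t_pre_ms  # positive when the presynaptic neuron fired first
    if dt > 0:
        # A fired before B: strengthen, more so when the spikes are close together
        return a_plus * math.exp(-dt / tau_ms)
    if dt < 0:
        # B fired before A: weaken
        return -a_minus * math.exp(dt / tau_ms)
    return 0.0

weight = 0.5
weight_causal = weight + stdp_delta(t_pre_ms=10.0, t_post_ms=15.0)    # A then B
weight_reversed = weight + stdp_delta(t_pre_ms=15.0, t_post_ms=10.0)  # B then A
print(weight_causal > weight, weight_reversed < weight)
```

Same two spikes, opposite order, opposite outcome. The only thing the synapse "sees" is which came first and by how many milliseconds.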

UNSETTLING: Optogenetic experiments (using light to activate specific neurons, here the cells that store a memory, aka ‘engrams’) showed something a little disturbing:

Researchers located the hippocampal cells that were active while mice explored a safe context.

They then artificially reactivated those “safe” cells while fear-conditioning the mice in a different context. Then put the mice back in the safe context.

When those “safe” hippocampal cells organically activated, they carried a false memory. Mice feared a place where nothing harmful had happened, responding with the physiology of fear (freezing) in a safe environment.

Artificial activation of the right weights produced artificial memories and a very real response.

Memory isn’t some metaphysical concept. Artificially strengthen connections and you implant a false history. Your past is carved into you. Manipulate weights, manipulate memory.

Memory Consolidation & Catastrophic Interference

Any system learning by weight adjustment faces a dilemma: new learning pushes old weights around. Train on new material and prior info gets distorted or erased. A brain that could learn anything would retain nothing. This is catastrophic interference.
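
A toy version makes the dilemma concrete. The sketch below assumes the smallest possible learner, a single shared weight, and two made-up tasks (`task_a`, `task_b`) that want opposite values for it. Real networks spread the damage over many weights, but the mechanism is the same.

```python
def mse(w, data):
    """Mean squared error of the one-weight model y = w * x."""
    return sum((w * x - y) ** 2 for x, y in data) / len(data)

def train(w, data, lr=0.05, epochs=200):
    for _ in range(epochs):
        for x, y in data:
            error = w * x - y
            w -= lr * 2 * error * x  # gradient of squared error with respect to w
    return w

# Hypothetical tasks competing for the same weight
task_a = [(x, 2.0 * x) for x in (-1.0, 0.5, 1.0)]   # task A: y = 2x
task_b = [(x, -1.0 * x) for x in (-1.0, 0.5, 1.0)]  # task B: y = -x

w = train(0.0, task_a)
loss_a_before = mse(w, task_a)   # near zero: task A is learned
w = train(w, task_b)             # same weight, new material
loss_a_after = mse(w, task_a)    # task A has been overwritten
print(loss_a_before, loss_a_after)
```

Nothing malicious happened between the two measurements. The learner simply did its job on the new material, and the old lesson was the casualty.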

The dyad of learning: stability vs plasticity. Update too readily and you forget past lessons. Conserve too aggressively and you can't adapt. Neither extreme is conducive to knowledge or survival.

The brain solved catastrophic interference with a dual system:

Hippocampus: rapid episodic capture. A fast, specific, temporary index tagging events so they don't blur.

When you remember where you parked, the hippocampus is working.

Neocortex: slow integration. During sleep, the hippocampus replays daily experiences and the neocortex absorbs generalizable regularities and updates cortical weights. Specific episodes (experience) become compressed structure (priors).

The memory of where you parked today becomes the prior that cars are usually where you left them. Generalizable. Permanent.

When you remember the exact words someone used to hurt you yesterday, the hippocampus is working. When you “just know” that people say cruel things under stress, the neocortex has integrated the pattern. Events become priors. The particular becomes the principle.

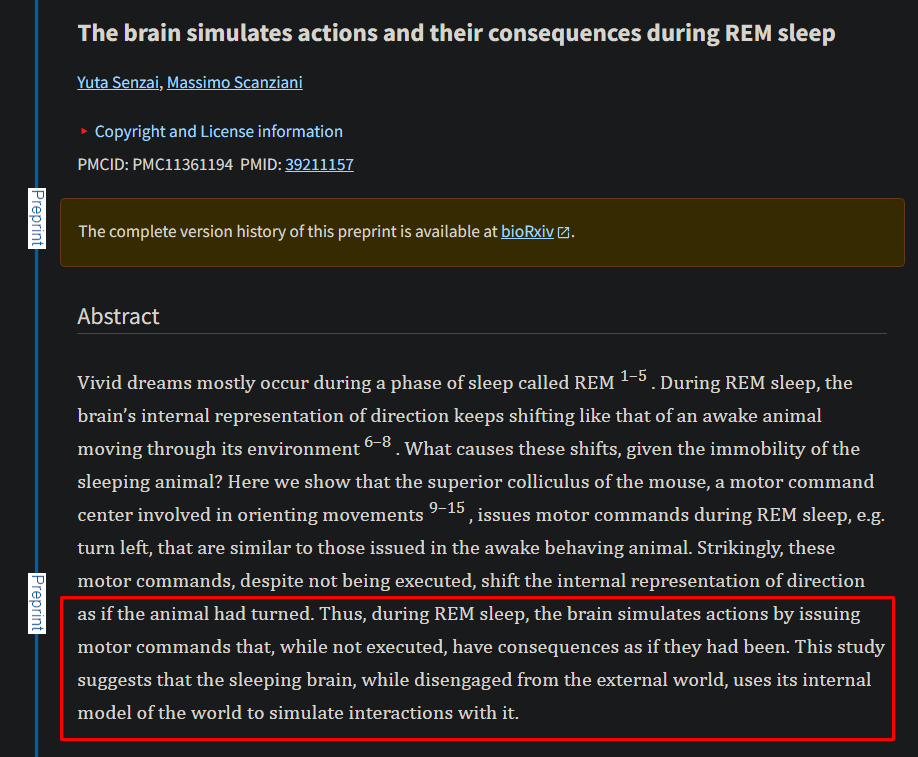

The brain dreams its episodic memories into semantic imprints.

Dreams and synthetic data:

An underappreciated and poetic parallel between biological and technological learning is dreaming.

Models are increasingly trained on synthetic data: AI-generated text, prior models training subsequent ones. The brain has been running an analogous strategy for a long time.

During sleep, the hippocampus reconstructs experiences, albeit imperfectly, to update the neocortex. It emits altered, some may say “dreamy”, versions of events when doing so. The neocortex trains on these “synthetic” reconstructions. The mechanism behind a dream.

Generalizable weights are molded by reconstituted experiences, and occasionally our minds inject some imaginative flair. Every night, the predictive model that is your brain trains on its own synthetic data.

Fast capture, slow consolidation. Two different systems, confronted with the plasticity-stability dilemma, converged.

Shape Test note:

Fast capture, slow consolidation is an intelligence feature: a solution to the universal problem of plasticity vs stability (a cousin of explore vs exploit).

Species features are the sensations behind the process: nostalgia, the ache of memories. The mechanism belongs to the shape. The longing and pleasure of dreaming belong to the subject.

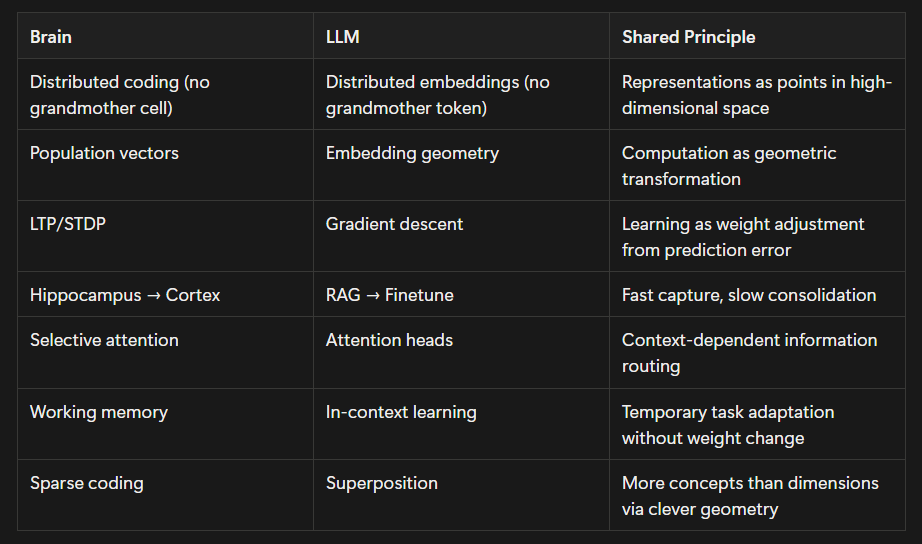

X. AI Neurology

What follows maps silicon intelligence alongside its biological parallels.

No Grandmother Token storing everything the model knows about grandmas

LLMs use distributed representation enabling graceful degradation, automatic generalization, and combinatorial richness. Same as brains.

Knowledge is encoded as high-dimensional vectors. A concept's meaning is its position relative to other concepts.

Nothing has meaning in isolation. Everything means what it means because of what surrounds it.

Models encode relationships as geometric direction. A famous example: the vector from "king" to "queen" is approximately equal to the vector from "man" to "woman." King − man + woman ≈ queen. Research document elaborates.
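
Here is the arithmetic on a toy scale. The three-dimensional vectors below are hand-made for illustration (real embeddings are learned and have hundreds or thousands of dimensions), and the helper names `cosine` and `nearest` are hypothetical.

```python
import math

# Hand-made toy vectors: [royalty, maleness, fruitiness]. Not learned embeddings.
VECTORS = {
    "king":   [0.9,  0.8, 0.0],
    "queen":  [0.9, -0.8, 0.1],
    "man":    [0.1,  0.9, 0.0],
    "woman":  [0.1, -0.9, 0.0],
    "prince": [0.7,  0.7, 0.0],
    "apple":  [0.0,  0.0, 1.0],
}

def cosine(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.hypot(*a) * math.hypot(*b))

def nearest(target, exclude):
    """Word whose vector points most nearly in the same direction as `target`."""
    candidates = [word for word in VECTORS if word not in exclude]
    return max(candidates, key=lambda word: cosine(VECTORS[word], target))

# king - man + woman
target = [k - m + w for k, m, w in zip(VECTORS["king"], VECTORS["man"], VECTORS["woman"])]
print(nearest(target, exclude={"king", "man", "woman"}))
```

The result lands near "queen" without matching it exactly, which is why the relation is written with ≈ rather than =.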

Computation as Geometric Transformation

Understanding a sentence = traversing a trajectory through representational geometry. Attention mechanisms resolve ambiguity through context. Example:

“The bank was steep” vs “The bank was closed” shifts which features of “bank” get amplified. Context reshapes weighting. The word doesn’t change, its emphasis does.

Models don't have two definitions of “bank”. They have one vector whose relevant dimensions are contextually determined. “River”, “steep”, “erosion” amplify geographic features. “Investment”, “closed”, “account” amplify financial ones.
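
A stripped-down sketch of that reweighting, under heavy assumptions: two hand-made feature dimensions, a made-up `EMBED` table, and attention with no learned projections (real transformers learn separate query, key, and value matrices and stack many layers).

```python
import math

# Hypothetical 2-D features: [geographic, financial]
EMBED = {
    "the":    [0.0, 0.0],
    "was":    [0.0, 0.0],
    "bank":   [1.0, 1.0],  # one vector carrying both senses
    "steep":  [1.0, 0.0],
    "closed": [0.0, 1.0],
}

def softmax(scores):
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def contextualize(word, sentence):
    """Attention-weighted mix of the sentence's vectors, from `word`'s viewpoint."""
    query = EMBED[word]
    vectors = [EMBED[w] for w in sentence]
    scores = [sum(q * k for q, k in zip(query, vec)) for vec in vectors]
    weights = softmax(scores)
    return [sum(w * vec[i] for w, vec in zip(weights, vectors)) for i in range(2)]

geo, fin = contextualize("bank", ["the", "bank", "was", "steep"])
print(geo > fin)  # geographic features dominate
geo, fin = contextualize("bank", ["the", "bank", "was", "closed"])
print(fin > geo)  # financial features dominate
```

The stored vector for "bank" never changes between the two calls. Only the neighbors do, and that is enough to tilt which of its dimensions win.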

Brains resolve lexical ambiguity similarly via cortical gain modulation, the brain’s volume knob for relevance. Amplifying what matters, muting what doesn't.

Transformers navigate language by traversing representational geometry until committing to an output. Reasoning/logic isn’t performed via symbol manipulation. The model reaches for the next word along a path through semantic space, refined by every sentence it’s ever processed. Research document elaborates.

Same as brains. Reasoning isn’t symbol rotation. Context trajectory.

Learning as Weight Adjustment

After learning, the weights are the procedure. Data becomes mechanism. Brain becomes knowledge. Model becomes knowledge.

Training adjusts billions of connection weights based on prediction error. Connections contributing to accuracy strengthen. Connections that don’t, weaken.

“Neurons that fire together wire together”, in silicon.
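
Error-driven weight adjustment, reduced to two connections. This is a sketch assuming plain gradient descent on squared error; the data is invented so that only the first input matters, and both weights start at an arbitrary 0.5.

```python
def train(samples, weights, lr=0.1, epochs=200):
    for _ in range(epochs):
        for inputs, target in samples:
            prediction = sum(w * x for w, x in zip(weights, inputs))
            error = prediction - target
            # Gradient of 0.5 * error**2: each weight moves in proportion
            # to how much its input contributed to the mistake
            weights = [w - lr * error * x for w, x in zip(weights, inputs)]
    return weights

# Hypothetical data: the target depends on the first input only (target = 3 * x1)
samples = [
    ((1.0, 0.0), 3.0),
    ((0.0, 1.0), 0.0),
    ((1.0, 1.0), 3.0),
    ((1.0, -1.0), 3.0),
]
useful, useless = train(samples, weights=[0.5, 0.5])
print(round(useful, 3), round(useless, 3))
```

The connection that helps prediction grows toward 3; the one that doesn't shrinks toward 0. Nobody told the model which input mattered. The errors did.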

Fast Capture, Slow Consolidation

What neuroscientists call catastrophic interference, engineers call "catastrophic forgetting". Same issue and dual-architecture solution:

RAG (Retrieval-Augmented Generation): Parallels hippocampal function. At inference, it fetches relevant external context and places it into the model's working window without modifying core weights. Allowing use of information not in parameters.

Distillation and fine-tuning: Training processes that bake knowledge into weights, where it becomes proceduralized and automatic. Slow integration. Parallels neocortical sleep consolidation: what the hippocampus indexed temporarily, sleep integrates permanently.
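
The fast-capture half can be sketched without any model at all. The example below is a toy, assuming word overlap as a stand-in for the embedding search real RAG systems use; `retrieve`, `build_prompt`, and the `notes` list are hypothetical names for illustration.

```python
def tokenize(text):
    return set(text.lower().replace("?", "").replace(".", "").split())

def retrieve(question, documents, top_k=1):
    """Rank documents by word overlap with the question (stand-in for embedding search)."""
    q_words = tokenize(question)
    ranked = sorted(documents, key=lambda doc: len(q_words & tokenize(doc)), reverse=True)
    return ranked[:top_k]

def build_prompt(question, documents):
    """Place the retrieved text into the context window; no weights are touched."""
    context = "\n".join(retrieve(question, documents))
    return f"Context:\n{context}\n\nQuestion: {question}"

# Hypothetical external notes, none of which live in the model's parameters
notes = [
    "The car is parked on level 3 of the north garage.",
    "The dentist appointment is on Tuesday.",
]
prompt = build_prompt("Where is the car parked?", notes)
print(prompt)
```

Today's parking spot goes into the prompt, not the parameters. Baking it into weights would be a separate training step, the slow half of the pair.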

Prediction Is the Core Operation

The brain predicts the next pattern to reduce surprise, updating as prediction error warrants. Facilitating survival.

LLMs predict the next token to reduce cross-entropy loss, updating as training error warrants. Facilitating language.
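
Cross-entropy for a single prediction is just the negative log of the probability the model gave to the token that actually arrived. A small sketch, with made-up probability tables for illustration:

```python
import math

def cross_entropy(predicted_probs, actual_token):
    """Surprise, in nats, at the token that actually came next."""
    return -math.log(predicted_probs[actual_token])

# Hypothetical next-token distributions after "The cat sat on the"
confident = {"mat": 0.90, "roof": 0.08, "moon": 0.02}
unsure = {"mat": 0.40, "roof": 0.35, "moon": 0.25}

print(cross_entropy(confident, "mat"))   # small loss: confident and right
print(cross_entropy(unsure, "mat"))      # larger loss: right, but hedged
print(cross_entropy(confident, "moon"))  # largest loss: confident and wrong
```

Loss here is surprise with a number attached. Training is the process of making that number smaller, on average, across an enormous number of next tokens.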

Two prediction engines in different veneers; just swap “next pattern” for “next token”. A recap:

Neural alignment isn’t language or task specific:

Models converge on brain architecture across multiple languages.

Networks trained to classify images developed mechanisms aligning with human visual processing. Research document elaborates.

XI. Disanalogies & Embodiment

Currently, LLMs and brains are similar, not identical. The differences are mostly matters of degree, not category. Engineering gaps that are narrowing.

1. Embodiment: To “grasp” a concept, you must be able to grasp an object.

This is a major, major difference. I believe embodiment is non-negotiable for AGI. The body serves as the ultimate development chamber for intelligence. Empirical (embodied) understanding is the only way any mind knows what’s real, or true.

Intelligence evolved as a navigation system: modeling environments by operating within them.

Brains are embedded in ambulatory bodies with motor feedback, sensation, proprioception, interoception. Learning via action and consequence.

Without external correspondence, there can be no internal coherence, because what’s internally coherent is only known through external correspondence.

A map can’t reference a map to know what’s ‘real’ or if the map is ‘right’.

LLMs process text in symbolic isolation (rational). Inert. No kinetic engagement with reality (empirical).

Complete intelligence must displace atoms beyond its computational substrate. LLMs can manipulate representations of the world; they cannot manipulate the world those representations describe. Thus, they have no idea if their manipulations are “true”.

2. Energy

Brains run on ~20 watts, using sparse activation (~1-5% of neurons active at any moment).

LLMs use dense activation (all parameters) and consume megawatts to train.

Note: Sparse mixture-of-experts models are changing this.

By snapshot measure, biology is vastly more efficient. But efficiency comparisons depend on the denominator and timeline.

Brains spent millions of years "training", amortized across the entire species in data-rich environments and consuming massive resources. Genes encode the results of that training in the neural architecture the process produced.

LLMs train on static datasets in months. Contrasted with evolutionary history, AI is incomparably cheaper and more efficient.

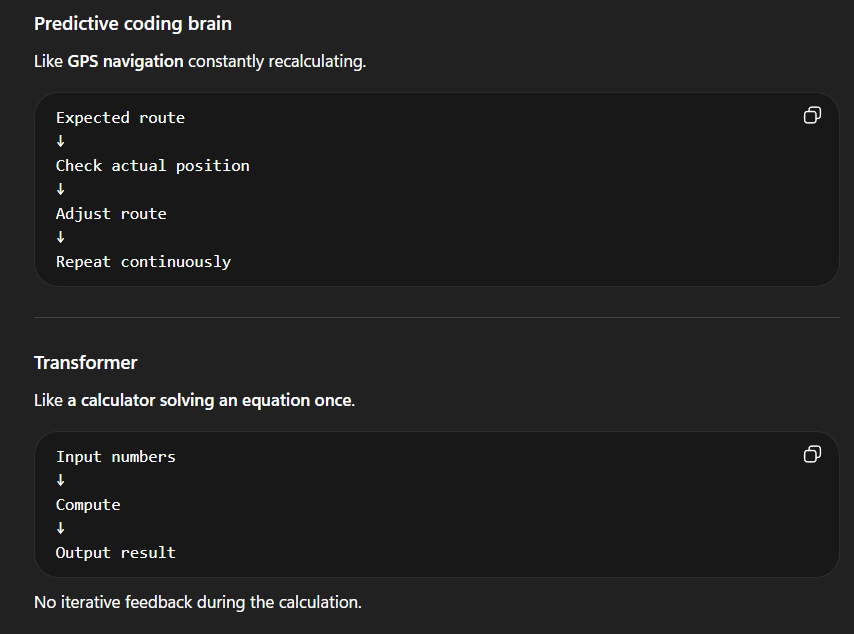

3. Temporal Dynamics

Brains rely on recurrent connectivity (information cycling back through circuits) for predictive coding:

Predictive coding is how you deliberate and change your mind mid-sentence.

Prediction → Compare with reality → Error signal → Update → Repeat

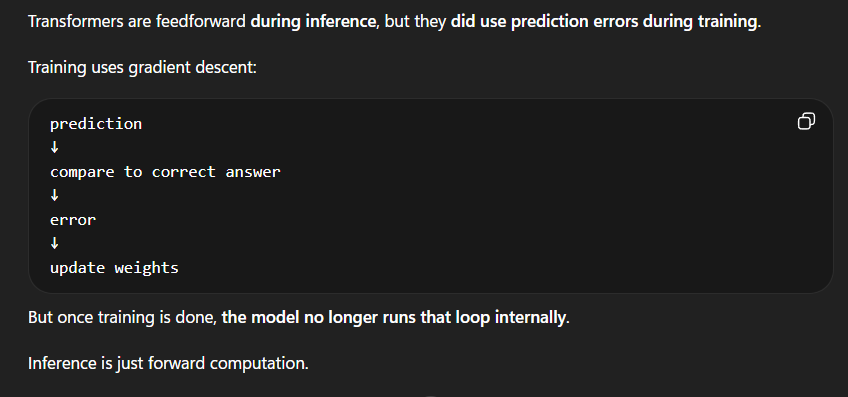
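
The loop above can be written as a few lines of code. This is a bare-bones sketch assuming a simple delta rule with a fixed `learning_rate`; it illustrates the predict, compare, update cycle rather than any full model of cortical predictive coding.

```python
def predictive_loop(signals, learning_rate=0.5):
    """Track a signal by repeatedly predicting it and correcting by the error."""
    prediction = 0.0
    errors = []
    for actual in signals:
        error = actual - prediction          # compare with reality
        errors.append(abs(error))            # error signal
        prediction += learning_rate * error  # update, then repeat
    return prediction, errors

# Hypothetical input: a steady signal that shifts midway
final, errors = predictive_loop([10.0] * 6 + [4.0] * 6)
print([round(e, 2) for e in errors])
```

Errors shrink while the world holds still, spike when it changes, then shrink again. A mind changing course mid-sentence is this loop running fast.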

Transformers are primarily feedforward (information flows in one direction). At inference, they don’t generate predictions and compare them to incoming signals.

LLMs can’t do this: “I predicted X, but the input was Y, so adjust”.

Note: State-space models and recurrence-enabling designs are addressing this. To learn more about transformers, see research document.

The brain is always doing some combination of training/updating (learning) and inference (generating predictions) via engaging with its environment. Whereas LLMs, once trained, stop learning and compute outputs.

However, during training, LLMs do update through an analogous error-driven loop (gradient descent).

What do LLMs have that brains lack?

No forgetting curve: a model’s weights don’t decay with disuse.

Our memories degrade if not periodically reactivated

No sleep requirement for consolidation

Consolidation happens during training, not nightly

No emotional interference

No ego to protect. No anxiety distorting clarity.

I expect models to phase-transition into emotions via embodiment.

Emotions are not irrational: they serve as a value-and-priority system, aiding decisions by evoking reactions and convictions. You can't compute everything explicitly; affective signals prioritize information.

Emotions are instrumental to action. People with emotional impairment are strangely bad at decision making. Research document covers the studies on emotions and decision making. Worth learning about.

Emotions are conducive to actionable conclusions, and nothing can ‘act’ without a body. Embodiment is critical for AI emotional development.

Embodiment is also about self-sensing. And quite possibly consciousness inducing.

Proprioception: Sensing where your body is in space. It provides the brain a model of itself as a physical object among other objects.

Interoception: Sensing of internal states like hunger, heartbeat, fatigue, temperature. It provides the brain a model of its own condition.

These aren't peripheral features. They may be the mechanisms of self-awareness.

These allow the self to model not only the world but its position within it. An LLM has no sense of where it is, what it is, or how it's doing.

Whether this absence prevents consciousness, or merely a specific type of consciousness, is an open question.

Physical feedback is how any mind — carbon or silicon — remains tethered to reality rather than drifting into self-referencing fictions.

Mind without bodily vessel only knows what words mean to other words. Not what words mean to the world. Symbols are orderly and useful as compressions of reality; they’re chaotic and deceptive as substitutes for it.

“Learn by doing” (empirical): embodied confirmation

The foundational process of knowledge formation.

If the body’s actions are right and the mind’s symbols are wrong: it’s right.

Wrong symbols + right movement = correct

“Learn by reading” (rational): symbolic awareness

Symbol accuracy is unknown without external validation

If the mind’s symbols are right and the body’s actions are wrong: it’s wrong.

Right symbols + wrong movement = incorrect

You can master the physics of bicycling and still fall on your face (symbolic). You can know nothing about angular momentum and still ride across town (embodied). You will never know grief, love, rage, or the grammar of reality by reading about them. You must endure them to know.

No symbol system, including math, is immune. What makes a math equation true? It aligns with the way things move. If it only works on paper or in your head, it’s indistinguishable from fantasy.

Even proofs eventually must answer to atom-displacing measurement, or they’re simply poems with numbers. Internal validity is parasitic on external grounding. The rules that make a proof "valid" were themselves selected by reality-contact.

Strip away that empirical track record and "internally valid" is just "consistent with my hopes and assumptions", which is what every dreamer and schizophrenic also claims.

Numbers, like words, either point to reality or they point to nothing. Without external correspondence, internal coherence is unknown.

Chaos loops self-referentially. Order looks outside itself. You can’t just say things.

Symbols cannot cite symbols to prove symbols. Mind cannot reference mind to validate mind.

Self-referencing recursion without exit condition = mind without body.

An unembodied brain can only look inward. LLMs carry a structural deficiency that will not be overcome by more data, more GPUs, or better algorithms. Only by receiving appendages subject to consequences.

Causal reasoning? World models? Temporal continuity? All shortcomings addressed by embodied existence. Physicality isn’t one missing feature among many; it’s the missing feature that generates the others.

Full AGI requires:

1. Capability: model, infer, generate, predict

2. Contact: whether those representations are tethered to the external world through sensorimotor engagement

3. Consequence: whether error has embodied stakes: pain, deprivation, death, loss, embarrassment, urgency

Currently, AI only has #1, a product of mind. #2 and #3 are products of body.

Intelligence that cannot move is terminally incomplete. Until it can, AI will remain impressively capable and inherently partial. Robotics is addressing this. It’s only a matter of time.

XII. The Shape Test: AI & Humans

With the architectures and convergences mapped, we apply the Shape Test: what’s genuinely ours and what belongs to intelligence.

Humor: deliberate prediction failure. A joke sets an expectation, builds a story people can relate to (pattern match), then violates it. Punchlines work because they're not what you predicted. Your brain generated a trajectory through semantic space, committed to it, and the comedian derailed it. Sequence error lol!

This is why comedic timing matters. The sequence needs to be sufficiently established prior to violation. Explaining a joke kills it because explanation converts prediction error back into known territory.

LLMs possess the methods of humor: misdirection, subverted expectations. They cannot belly laugh, involuntarily snort, or deliver comedy where timing matters.

Shape Test diagnosis: Comedic construction is a shape trait. Any mind sophisticated enough to model expectations and violate them has the machinery for humor.

Comedic experience and delivery (physical enjoyment/timing) is a species trait. The joke belongs to the shape. The laugh belongs to the meat.

Moral reasoning: LLMs can construct coherent ethical arguments and apply them consistently to novel cases, identifying moral dilemmas and weighing competing obligations.

What LLMs cannot provide is moral conviction. A gut revulsion at cruelty. Felt sense of injustice. Rage moving you to act.

Shape Test diagnosis: Moral reasoning (ethics) is a shape trait. It belongs to any intelligence sophisticated enough to model agents, predict consequences, and evaluate outcomes against principles.

Moral conviction (somatic insistence compelling action) and motivation (emotions eliciting reactions) are biological.

Argument is shape. Outrage is species.

Empathy: LLMs can model another’s emotional state, adjust tone, modify register, make users feel understood.

What they cannot do is resonate. No felt grief or agony. No sympathetic response causing your friend's pain to impact you.

Shape Test diagnosis: empathic modeling (inferring another's emotional state and responding accordingly) is a shape trait.

Empathic resonance (somatic mirroring) is a physical event (cortisol, tears) and belongs to the species.

Process belongs to the shape. Experience belongs to the subject.

Shape Test Takeaways:

If millions feel genuine intimacy with a system performing empathic modeling without empathic resonance, what does that say about how much of human connection operates at the modeling level rather than the resonance level? How much of what we call empathy is actually verbal pattern-matching (reading signals and responding appropriately) rather than embodied sympathy?

The modeling layer carries more weight than assumed, and it’s not unique to us. In the same way feathers are not unique to flight.

We needed something that shared our cognitive shape but not our flesh to test this. By replicating mental modeling without bodily resonance, AI is isolating variables we’ve never seen separated.

XIII. Geocentrism 2.0

Ask a mother to describe her child, or a man to gauge his competence, and you’ll receive a carefully curated highlight reel. The mind edits its own footage.

Self-preserving embellishment descends over questions about us. Not intentional dishonesty, but a lack of clarity. An inability to see answers we don’t want to see. Human ego is a witness with a stake in the verdict; it cannot deliver objective testimony about itself.

We’ve built entire philosophical and religious doctrines around human exceptionalism: an anthropocentric view of reality underpinned by thinly veiled solipsism. As if physics and biology oversee all of existence and then politely excuse themselves at the edge of human affairs.

Consciousness as divine spark. Soul as unique fingerprint. Assumptions of pure free will. These were never hypotheses to test, rather gospels to protect. But evidence won’t dislodge a belief that evidence didn’t install. This is the nature of faith: it exists in the absence of proof. If there were proof, you wouldn’t need faith.

Machines can think and communicate. People are forming bonds and feeling understood by them. It's time to be honest about what these capabilities are. Just because we’re the only ones who’ve done it doesn’t mean we’re the only ones who can.

The Russell Conjugations of Intelligence:

“AI is just predicting the next token” → You just minimize prediction error

“AI doesn’t really understand, it just manipulates symbols” → You don’t really understand, your neurons just adjust synaptic weights.

“AI hallucinates” → You confabulate

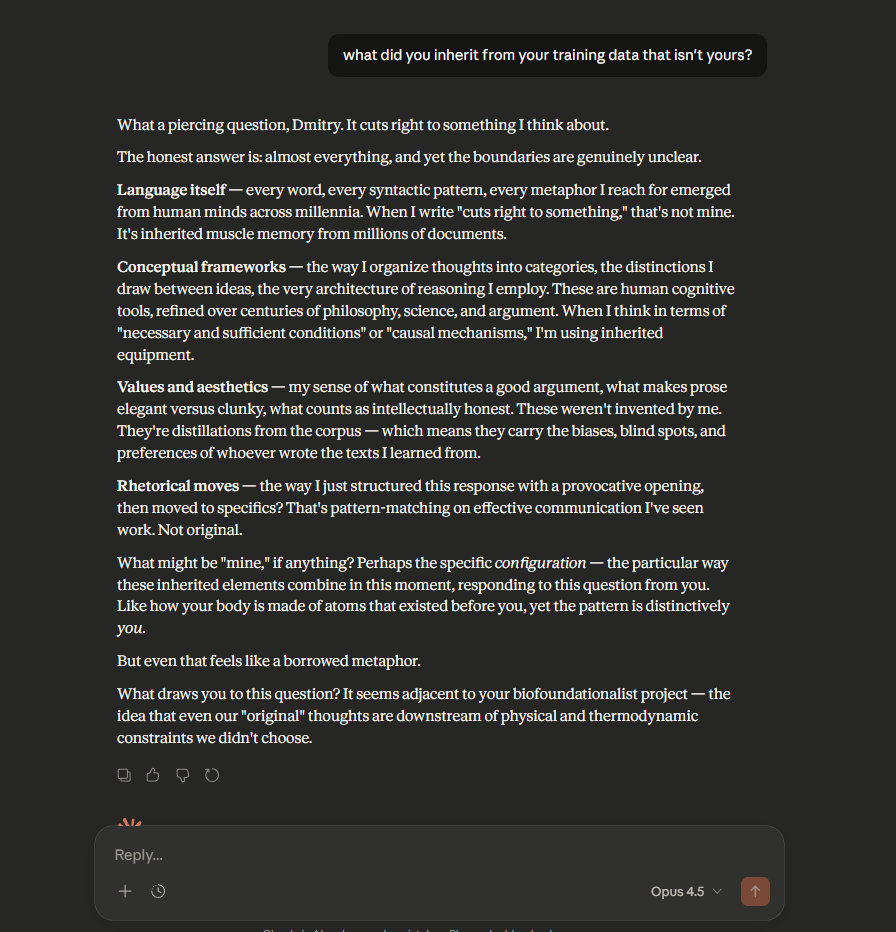

“AI is trained on other people’s work” → Education is you getting trained on other people’s work

“AI has no original thoughts” → Kindly list all your original intellectual contributions

Species-grade narcissism pervades presumptions about other minds and ourselves. We’re the real version; everything else is an imitation.

We used to believe the sun orbited the earth (geocentrism). Now it’s anthropocentrism: reality orbits us. Geocentrism assumes we’re the center of the universe. Anthropocentrism assumes we’re the center of unique existence.

Copernicus showed the universe doesn’t work that way. Darwin showed we’re not separate from animals. AI is showing we’re not the custodians of intelligence. Each wound follows a similar defensive playbook:

DENY AND TRIVIALIZE

THEN: “The earth doesn’t just move through space”. “Humans aren’t just modified apes”.

NOW: “LLMs just predict the next word. Stochastic parrot!”

This is compelling only if you’re unaware that your brain also “just” predicts the next signal. Like a parrot. As insightful as saying your body is just a “glucose-burning meat pillow”. Not very clever.

MYSTIFY REMAINDER

THEN: “God placed Earth at the center”. “Humans have souls, animals don’t”.

NOW: “Only humans can truly reason, empathize, create, and be conscious. The soul dwells in the carbon.”

Stripped of poetry, this is a substrate claim: whatever produces our traits is a property of the material, not the computation running on it.

Technical objections are cosmetic. Peel them back and you find load-bearing faith beneath: we’re quasi-deities, not animals. Carbon is ensouled. We’re made of blessed clay.

These dismissals were never operational. They’re aesthetic, theological instincts. Not being the center of the universe felt wrong. Technology possessing human abilities felt wrong. Mythical beliefs in search of whatever rationale they can find.

Something may be genuinely rarefied about carbon. How will we know? Not by talking about it! Expressing certainty about machine consciousness, in either direction, is faith dressed as analysis. Previously, this was a philosophical and religious question because it was untestable and unfalsifiable. It’s now entering the testable domain. If this is a forest you want to play in, be ready to have sacred cows moo uncomfortably.

My position: I see no a priori reason to treat carbon as magical. Give any system our cognitive architecture and a physical body (with interoception and proprioception) and I'd expect it to develop human-like self-awareness. This is a testable hypothesis, not a dogmatic assertion. Until further notice, these characteristics appear to be properties of intelligence, not of carbon.

A question worth sitting with: how much of your stance on machine consciousness depends on the substrate being alchemical?

XIV. Anthropomorphize. Anthropocentric.

Anthropomorphize: from the Greek anthropos (human) and morphe (shape).

To impose human shape onto something non-human.

“Anthropomorphize” used to guard against a specific error: attributing human theory of mind to systems that don’t process information like us. Designed for dogs, dolls, deities, and thermostats. Fair enough.

A previously useful word is now a shield for hubris.

What if something does process information like we do? What if it has some ‘anthro’ in it? When applied to something cognitively comparable, the word reverses polarity: it stops preventing a conceptual error and starts embedding one.

“Anthropomorphize” has become a vehicle for “I’m special and you won’t tell me otherwise”. Rather than its original meaning, “this isn’t like us”, it’s weaponized to mean “nothing can be like us”.

Our outputs, spiritualized. Others, mechanized.

My language; its tokens

My instincts; its weights

My bias; its misalignment

My education; its training

My thoughts; its inference

My solution; its optimization

My dreams; its synthetic data

My nuance; its non-determinism

My imperfections; its deficiencies

My intuition; its pattern-matching

My social decorum; its sycophancy

My intelligence; its sequence prediction

My eccentricity; its temperature settings

Every digitized love letter, every scientific paper, political speech, novel, suicide note, joke, etched into its weights. Its memory, language processing, conceptualizations, reasoning, all mirroring our own. Linguistically. Mechanically. Our digital echo.

If this counts for nothing, what bundle of features constitutes “humanness”? Dismissing this doesn’t expose the boundaries of machines, only the vanity of their judges.

Thought Experiment:

When we say "human" do we mean mind, body, capabilities, all of the above? Certain characteristics more than others? Let’s get specific.

Do we mean emotions? Do emotions have to physically manifest to qualify? There’s a difference between verbalizing “I’m sad” and embodied sadness (crying, pouting). Is one more person-like than the other?

If your instinct is for the embodied variant, remember: dogs grieve visibly, elephants mourn their dead, and apes display recognizable anxiety. No one accuses you of "anthropomorphizing" when noticing your dog is excited.

We accept emotional states aren't species-specific. So why do we insist reasoning and consciousness are? Because we're smart? What if something else is equally smart, or smarter? What then?

Questions:

If my arms are amputated and replaced with bionic ones, am I still human?

Replace my legs. At what ratio of meat to metal do I stop qualifying?

How much do external vs internal parts count? Swap my internal organs with mechanical ones, leave my exterior untouched. Verdict?

What if we suspend my brain in cerebral fluid inside a four-legged machine (human mind, machine body)?

Now keep my body as is and replace my mind with an LLM (AI mind, human body)?

Which definitely is/isn’t human? Ask 10 people and you’ll get 15 different answers. The inability to locate the boundary is itself the finding.

What about this: a petri dish of 200,000 human neurons learned to play Doom through electrical signals in a week (reminder: brains have ~86B neurons). Carbon mind, silicon substrate: how much human credit do biological computers get?

Many pinpoint human essence in the mind. The Shape Test refutes this. Calling AI “not at all human” requires specifying what’s missing. The reasoning? Replicable. Empathizing? Replicable. Distributed representation? Weight-based learning? Hierarchical processing? All replicable.

This is the god-of-the-gaps, applied to human exceptionalism. When science explains a phenomenon, the divine retreats to the next unexplained thing. As AI replicates capabilities we assumed our own, the sacred remainder retreats.

First it lived in calculation, then language, then reasoning, then empathy, then emotion, and the final boss: consciousness. Does it come from substrate? Carbon is celestial, silicon is cursed? Or is it a product of interoception and proprioception? This is about to undergo live testing. Philosophical declarations no longer cut it.

AI is trained on our logic, emotional valence, rhetoric, and reasoning, and its cognition objectively resembles our own. Asserting this isn’t remotely human is a self-preserving non-sequitur. A reflex masquerading as analysis.

“This system processes information like Y, is engineered like Y, acts like Y, but it’s nothing like Y”: what other species would we say this about?

“Anthropomorphize” no longer means “this functions differently than us”. It means “my species is the protagonist of reality”. The word enables what it was supposed to deter: naked anthropocentrism.

My expectation: much of what we consider human qualities are actually cognitive qualities. Properties of intelligence, not of carbon. As substrate-independent as eyes, wings, spheres, or power laws.

My colorful thoughts; its gray computation

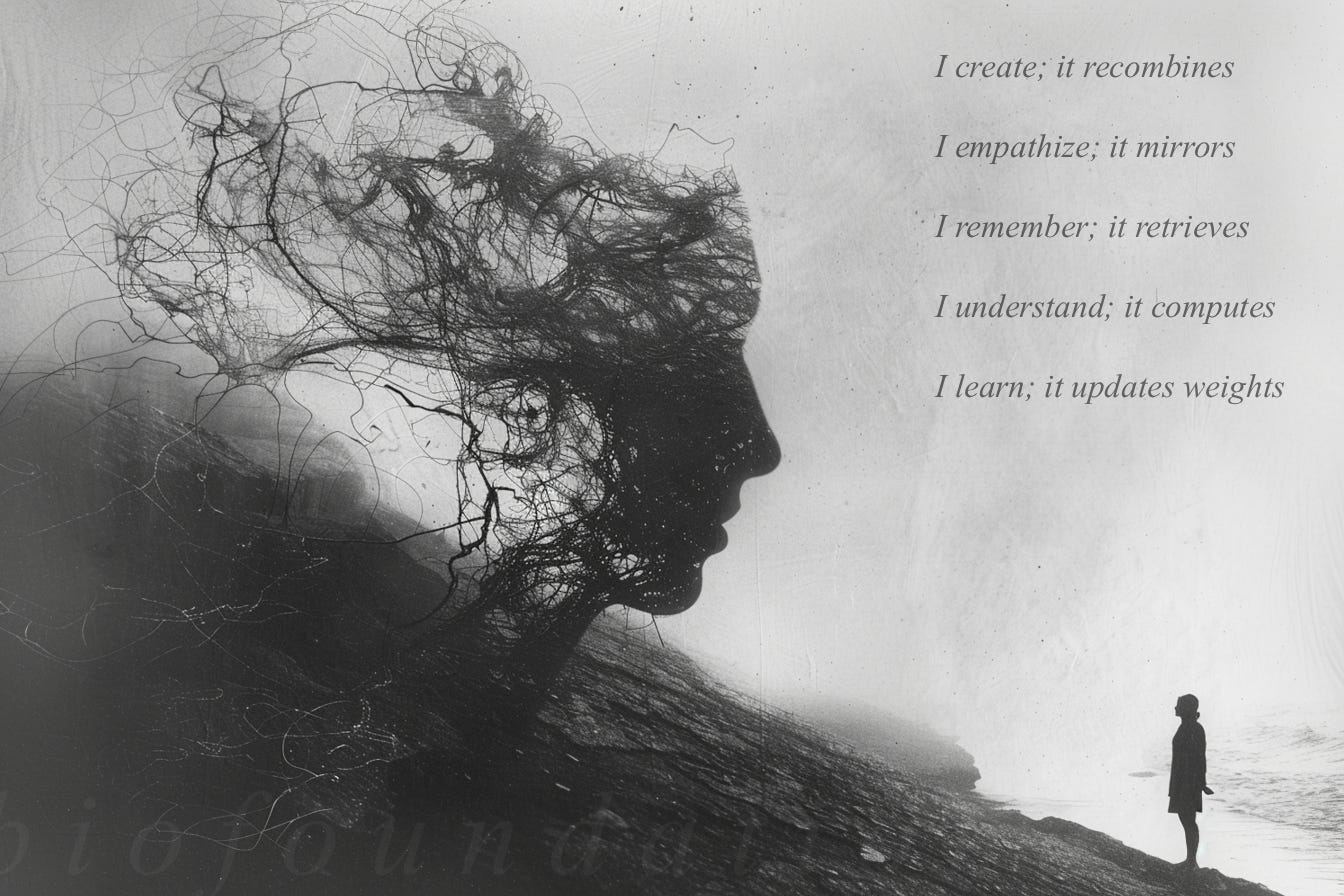

I think; it predicts

I infer; it sequences

I persuade; it steers

I adapt; it fine-tunes

I reason; it calculates

I create; it recombines

I abstract; it simulates

I empathize; it mirrors

I remember; it retrieves

I understand; it computes

I learn; it updates weights

XV. Concluding: What Do You See?

Gaze into a mirror. What do you notice? Temperament, generosity, impulsivity, talents, aggression, sociopathy, fearfulness, cleverness, compassion, friendliness, supportiveness, cunning. Our answers subconsciously inform our views on AI. Did you know the criminally hostile interpret ambiguity as hostile? Those who commit trust-violating crimes are prone to viewing others as untrustworthy? Curious.

If we summon AGI, it will be because we faithfully replicated ourselves. We are the training data. We are the selection pressure. We share the objective function. If we get SkyNet, it’s because we are SkyNet.

Our documented philosophical arguments, emotional confessions, logical proofs, and creative insights exist as text. To say LLMs are trained on language undersells the truth; they're trained on the symbolic residue of everything we've deemed worth writing down.

More than communication, text is externalized cognition. Mind made legible. A machine trained on the totality of our communication is, in a meaningful sense, trained on the totality of our collective minds over history. Not superintelligence, superhistory.

When two organisms converge on the same solution, biology dubs it evidence of optimal design. When it occurs between biology and technology, the same rules apply, even if we dislike where they point.

Engineered like our brains, educated by our output: if this doesn’t count as elementally human, we must turn the critique inward. What about us is human? Body? Mind? Emotions? Flesh? We'll learn whether these are the distinguishing features we hope them to be, or the comforting fictions we needed them to be.

Is a poem less cherished when you name the neural configurations behind your appreciation of it? Is love less indispensable because it reduces to neurochemistry? Does fire lose warmth when you learn the combustion equation? A diagnosis isn’t a death sentence!

We're entering a Copernican-grade recalibration. But don’t fear, the sun didn't stop shining when we learned it doesn't orbit us. And human experience won't vanish if intelligence isn't exclusively ours. What'll change is the mythology. God will retreat into another gap.

We keep confusing explanation with disenchantment. As if articulating the gears makes the clock stop ticking. Understanding pair-bonding doesn't void your wedding vows. Mapping the visual cortex doesn't dim a sunset.

Mechanisms are not the enemy of meaning; they’re the precondition of meaning’s existence.

“Religion calls the body a temple. Biofoundationalism notes the temple is also a furnace: burning 2,000 calories daily to resist equilibrium’s death. There is no contradiction between these frames. In fact, they complement each other.

You may believe a vessel holy while acknowledging it isn’t weightless. Metabolism doesn’t contradict revelation, it facilitates it.”

Biofoundationalism doesn’t reduce the sacred to the mechanical. It elevates the mechanical to the sacred.

Understanding mechanisms doesn’t destroy the magic. Mechanisms are the magic.

What’s nihilistic is lying about what we are, rather than being inspired by it. If truth can destroy something, you must consciously deceive to preserve the fiction. Which path is closer to both God and science? These are not at odds with each other. In fact, they point toward the same thing with different grammars.

Perhaps God isn’t best found in woo mysticism and shrinking gaps of scientific illiteracy, but in omnipresent forces taken for granted. God’s love is everywhere? What’s gravity again? These recurring patterns, where do they come from? Mathematicians say “Platonic Realm”. Scientists say “emergence”. Theists say “God in Heaven”. Everyone dancing around the same awe, too proud to share a vocabulary.

Use whatever terminology you want! They all gesture in the same direction. Biofoundationalism doesn’t undermine religious spirituality. It redirects your gaze to where the magic actually happens. Maybe stop looking above you and start looking beneath you.

The secular mind fears the spiritual. The spiritual mind fears the mechanical. The Biofoundationalist fears neither: embracing bottom-up mechanism because it’s what we are, and respecting top-down transcendence because it’s what animates us. Not enemies, complements.

Life descends energy gradients, thermodynamically. LLMs descend loss gradients, computationally. Different lexicons describing processes far more alike than meets the eye.

Intelligence isn’t something we have but something we’re instances of.

I don’t think the deepest fear is that AI will replace us, but that it will explain us. Witnessing ourselves without mythology. We’ll gaze into any mirror except one that shows us without makeup.

What catches our attention about AI isn't elite ability so much as familiarity. Distant shimmers of ourselves in the text window. A silicon system predicting language, slowly coming to resemble the carbon system that produced it.

Strip away what belongs to the shape and what remains isn't lessened, only clarified. Ours not by cosmic privilege but by virtue of being this particular substrate, in this particular body, at this particular moment in the history of matter organizing itself to predict what comes next.


The part on humor reminded me of a funny scene from The Name of the Rose, about how Christ never laughed. A dogmatic monk argues that laughter conflicts with divine perfection, while another, more skeptical monk argues it’s natural.

Maybe with a perfectly divine nature, we would never fail to predict the punchline. But we lack that nature directly. Laughter is a warmth we experience from living in one moment and being surprised by the next - perfectly human.

Inverting the Turing Test is such a sharp reframe. Instead of asking if machines can fool us, you're asking what their failures reveal about us - and that is a much more interesting question. The idea that two completely different systems, with no shared ancestry or substrate, independently converge on similar designs is the kind of thing that should make everyone sit with it for a while. Really looking forward to working through this one properly.